AI and the missing first step in learning

What could be the long-term impact of AI on career progression in knowledge work?

I recently saw a post asking whether AI will replace junior Scrum Masters. The question itself is not new. But it made me see a connection I had not made before.

And it is not very comfortable.

How people actually learn a profession

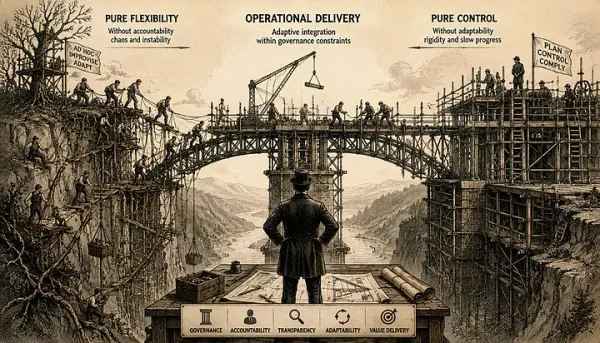

Most professional growth follows a pattern often described as Shu–Ha–Ri, a concept from Japanese martial arts.

Shu: Follow the rules

Ha: Break the rules

Ri: Transcend the rules

At the Shu level, the focus is simple: repeat established patterns, follow instructions, execute known routines. It is not creative work. It is not glamorous. But it is where understanding is built.

This is not just philosophy. It aligns with what we know from learning science.

In “Make It Stick”, the authors argue that durable learning comes from active recall, repetition, and practice under constraints, not from passive exposure. In “Peak”, Anders Ericsson and Robert Pool describe deliberate practice: expertise is built through structured repetition with feedback, often on tasks that are not interesting but are essential.

In other words, people learn by doing the boring parts first.

That is Shu.

Where AI fits into this

Now look at what AI does best.

It follows patterns. It applies rules consistently. It executes structured tasks quickly and cheaply.

In other words, it operates extremely well at the Shu level.

And that creates a structural shift.

The first trap: disappearing entry points

We already see early signals.

- Junior developer tasks being automated or heavily assisted by tools such as GitHub Copilot

- First-line support, documentation, and coordination roles being reduced or redefined

- Routine analysis, reporting, and content production increasingly handled by AI

Whether or not entire roles disappear is still an open question. But one thing is clear: the nature of entry-level work is changing.

The simplest interpretation is efficiency. The deeper implication is learning.

If AI handles Shu-level work faster and cheaper, the traditional entry points for learning start to disappear.

The second trap: even when we try to keep them

Let’s assume we recognize the problem and try to preserve those learning paths.

Now we face a different issue.

How do you justify asking a junior person to manually perform repetitive, structured work when AI can do it faster, cheaper, and often better?

Even if you want to protect the learning experience, the economic pressure works against you.

And this is where the second trap appears.

Not only do the opportunities shrink, but the motivation to maintain them weakens.

Why this matters more than it looks

For those of us who have already gone through Shu and Ha the hard way, AI is an advantage. It removes routine work and amplifies productivity.

But it also raises a question that is easy to ignore:

Who comes next?

How will people develop real understanding if they skip the stage where they learn by doing?

Not just knowing what to do, but developing the “feel” for the system. The ability to recognize patterns, detect anomalies, and understand consequences beyond instructions.

Those are not abstract skills. They are built through repetition, mistakes, and correction.

Remove that stage, and you risk creating a generation that can operate tools, but not understand systems.

What people predict vs. what we should watch

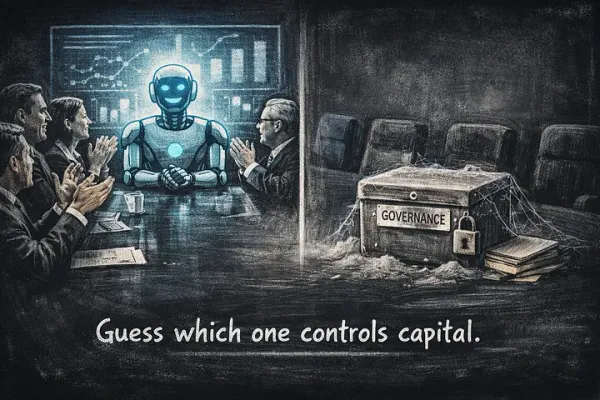

A lot of current discussion focuses on whether AI will “replace jobs.”

That framing is too shallow.

The more important question is:

What happens to the learning pipeline?

Because even if senior roles remain, they depend on people who once went through Shu.

If that layer erodes, the system does not break immediately. It degrades over time.

If this sounds familiar, it should. WALL-E showed a version of this long before today's AI tools existed: people surrounded by machines that optimize everything for comfort, until they lose the ability to do even the simplest things themselves. It looked like satire. But the mechanism is the same. When capability is consistently delegated, it is not preserved; it degrades. And the problem is not the machines. It is the absence of intentional friction where learning used to happen.

Not a conclusion, but a responsibility

This is not a criticism of AI. It is doing exactly what it is supposed to do.

It is also not a call to “stop using AI.” That would make no sense.

But it does highlight something we have not fully addressed yet.

If the first stage of learning is being automated away, then it has to be redesigned intentionally.

Otherwise, we may solve today’s efficiency problem by quietly creating tomorrow’s capability gap.

It is still early enough to think about it.

The question is whether we will.