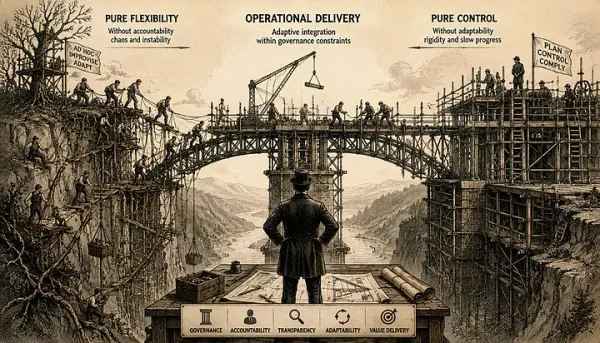

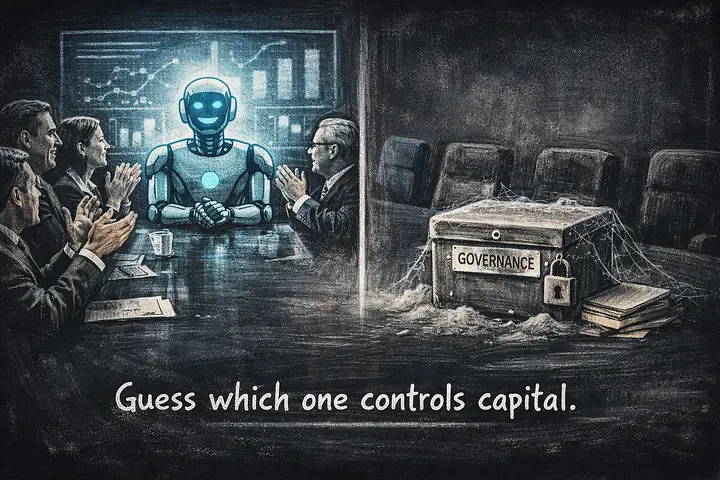

AI does not fail because of models. It fails because of governance.

A 2025 BCG analysis cited by Business Insider suggests that only around 5% of companies extract meaningful value from AI investments. The rest experiment, present, and absorb the cost.

The instinctive explanation is technical immaturity. Models are new. Tooling evolves quickly. Talent is scarce. That explanation is convenient.

The DORA 2025 State of AI-Assisted Software Development report shows something more structural. When governance exists, system dynamics look different. When it does not, the system degrades in predictable ways.

This is not about enthusiasm. It is about measurable flow.

Governance shows up in delivery physics

In environments with effective governance, lead time for changes clusters between 1 day and 1 week. In low-governance environments, it stretches to 1 month or more. That is not a cosmetic difference. It reflects decision latency, approval friction, and unclear ownership.

Rework rates tell a similar story. Where governance is weak or absent, rework exceeds 30%. Where governance is present, it stays below 15%. Rework is not a minor technical inefficiency. It is capital being spent twice.
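To make these two measures concrete, here is a minimal Python sketch that computes median lead time and rework rate from a list of change records and compares them against the bands quoted above. It is an illustration under stated assumptions, not DORA's methodology: the record fields (`committed_at`, `deployed_at`, `is_rework`), the function names, and the band labels are hypothetical, "1 month" is approximated as 30 days, and rework is simplified to a per-change flag.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Change:
    # Hypothetical record for one change: commit time, production deploy
    # time, and whether the change redid earlier work (simplified flag).
    committed_at: datetime
    deployed_at: datetime
    is_rework: bool


def median_lead_time(changes: list[Change]) -> timedelta:
    """Median time from commit to production across all changes."""
    if not changes:
        raise ValueError("need at least one change")
    return median([c.deployed_at - c.committed_at for c in changes])


def rework_rate(changes: list[Change]) -> float:
    """Share of changes flagged as rework (0.0 to 1.0)."""
    if not changes:
        raise ValueError("need at least one change")
    return sum(c.is_rework for c in changes) / len(changes)


def classify(lead_time: timedelta, rework: float) -> str:
    # Cut-offs taken from the figures quoted in the text; 30 days stands in
    # for "1 month or more". The labels are illustrative, not official.
    if lead_time <= timedelta(weeks=1) and rework < 0.15:
        return "governed pattern"
    if lead_time >= timedelta(days=30) or rework > 0.30:
        return "low-governance pattern"
    return "in between"


if __name__ == "__main__":
    start = datetime(2025, 1, 6)
    sample = [
        Change(start, start + timedelta(days=d), rw)
        for d, rw in [(1, False), (2, False), (2, False), (3, True),
                      (3, False), (4, False), (5, False), (6, False)]
    ]
    lt = median_lead_time(sample)
    rr = rework_rate(sample)
    print(lt, f"{rr:.1%}", classify(lt, rr))
```

With this made-up sample, the median lead time is 3 days and the rework rate is 12.5%, so it lands in the governed band. The median is used rather than the mean so that a few stalled changes do not dominate the picture.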

By the way, if you are looking to manage rework, Amplio has built its approach around reducing avoidable rework, because rework is one of the most expensive hidden taxes in software delivery. When rework crosses 30%, you are not innovating. You are burning the budget.

And AI amplifies this dynamic. Without governance, AI accelerates output and multiplies rework. With governance, AI reduces cycle time without destabilizing the system.

The difference is not the model. It is the decision structure around it.

Demo style versus governed production style

Most AI initiatives start in what I call demo style. Small team. Fast iteration. Impressive prototype. Early excitement. Limited integration. No explicit financial accountability. In demo style, success is measured in capability: “It works.” “Accuracy improved.” “Adoption is growing.”

Governed production style looks different.

- Clear financial hypothesis before launch.

- Named owner of downside risk.

- Defined kill criteria.

- Integration into capital planning, not innovation theater.

- Operational metrics tied to business flow.

The DORA data reinforces this distinction. High-performing environments exhibit shorter lead times, lower rework, and more stable delivery patterns. Those outcomes do not emerge from enthusiasm. They emerge from disciplined governance.

AI without governance increases variance. Governance converts variance into signal.

Start with measurement, not tooling

If governance is clarity, then measurement is its instrument.

Scrum.org’s Evidence-Based Management framework offers a practical starting point. It separates value into four domains: Current Value, Time-to-Market, Ability to Innovate, and Unrealized Value. Applied to AI, this forces harder questions.

Here are examples of AI-relevant metrics in two critical domains:

Released value indicators

- Reduction in lead time for changes from 4 weeks to 5 days after AI-assisted code review.

- Decrease in rework rate from 32% to 14% following introduction of AI-supported test generation.

- Measurable reduction in defect escape rate in production.

- Direct cost savings in support operations after AI-driven triage automation.

If those numbers do not move, value has not been released.
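Checking whether those numbers moved is simple arithmetic, but it is easy to mix up a relative reduction with a drop in percentage points when reporting rework. A minimal sketch using the illustrative figures from the list above (4 weeks treated as 28 days); the function names are hypothetical.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fractional reduction from a baseline (0.5 means halved)."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before


def percentage_point_drop(before_pct: float, after_pct: float) -> float:
    """Absolute drop between two percentages, in percentage points."""
    return before_pct - after_pct


# Lead time: 4 weeks (28 days) down to 5 days.
print(f"Lead time: {relative_reduction(28, 5):.0%} shorter")  # Lead time: 82% shorter

# Rework: 32% down to 14%, expressed both ways.
print(f"Rework: {relative_reduction(32, 14):.0%} relative reduction, "
      f"{percentage_point_drop(32, 14):g} percentage points")
# Rework: 56% relative reduction, 18 percentage points
```

Reporting both forms for rework keeps the claim honest: an 18-point drop and a 56% relative reduction describe the same change.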

Unrealized value indicators

- Percentage of high-friction workflows not yet supported by AI.

- Estimated revenue opportunity from reducing customer onboarding time.

- Opportunity cost of manual compliance reporting still performed without automation.

- Gap between current feature throughput and market demand.

Unrealized value quantifies what could improve if the system were governed and scaled correctly. It prevents teams from mistaking activity for impact.

The important distinction is this: demo style measures capability. Governed production style measures system impact.

Governance is not oppression

Governance does not slow innovation. It defines consequence.

In DORA terms, governance correlates with almost every key indicator. In capital terms, it determines whether AI improves revenue, margin, or cash flow.

Without governance, AI becomes an optional accessory. With governance, it becomes part of the operating model.

That shift requires discomfort. Financial thresholds must be defined. Projects must be killed when metrics do not move. Executives must own downside risk, not just upside narrative.

Only a small percentage of organizations accept that level of consequence. That is consistent with the roughly 5% that extract meaningful value. The rest remain in demo mode.

If you are serious about AI, start here:

- Define one financial metric AI must move within 6–12 months.

- Define the minimum acceptable impact threshold.

- Name the accountable owner.

- Measure lead time and rework before and after introduction.
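One way to make the first three items of this checklist explicit is to record them as a small charter and let the kill criterion follow mechanically from the numbers. The sketch below is a hypothetical illustration, not a prescribed tool: the `AIInitiative` class, its fields, the decision labels, and the example metric ("support cost per ticket") are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class AIInitiative:
    # Hypothetical charter mirroring the checklist above.
    name: str
    owner: str                # named accountable owner
    metric: str               # the one financial metric AI must move
    baseline: float           # metric value before AI was introduced
    min_improvement: float    # minimum acceptable impact, as a fraction
    review_date: date         # end of the 6-12 month window
    lower_is_better: bool = False  # e.g. cost metrics should go down

    def improvement(self, current: float) -> float:
        """Fractional improvement versus baseline, signed so positive is good."""
        if self.baseline == 0:
            raise ValueError("baseline must be non-zero")
        change = (current - self.baseline) / self.baseline
        return -change if self.lower_is_better else change

    def decide(self, current: float, today: date) -> str:
        # Threshold met: keep funding. Window closed without meeting it: kill.
        if self.improvement(current) >= self.min_improvement:
            return "continue"
        if today >= self.review_date:
            return "kill"
        return "keep measuring"


pilot = AIInitiative(
    name="support triage",
    owner="Head of Support",
    metric="support cost per ticket",
    baseline=12.0,
    min_improvement=0.10,
    review_date=date(2026, 6, 30),
    lower_is_better=True,
)
print(pilot.decide(10.5, date(2026, 1, 15)))  # continue (12.5% cheaper)
print(pilot.decide(11.8, date(2026, 7, 1)))   # kill (threshold missed, window closed)
```

The design choice worth noting is that the threshold and review date are fixed before launch, so the "kill" outcome is decided by the charter rather than renegotiated once the project has momentum.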

Then decide if you are governing AI, or simply experimenting with it.

Rethink the model. Then decide if you are serious.

P.S. At Robust Agile, we help organizations establish governance for AI initiatives so that experiments translate into measurable business impact. If your AI efforts need clearer accountability, capital discipline, and delivery stability, that is the work we do.