Risk Management under Real Velocity: Push Detection Downstream, Limit Blast Radius

I was recently quoted in a TechBullion article on balancing speed and security in business risk management. The core idea was simple: increasing business velocity does not remove the need for risk management. It changes its economics.

This is not a technical nuance. It is a decision problem. At higher velocity, both the cost of failure and the cost of inaction increase. Mistakes surface faster. Consequences propagate wider. Delays become more expensive.

Yet most organizations still treat risk as if they operate in a slower world.

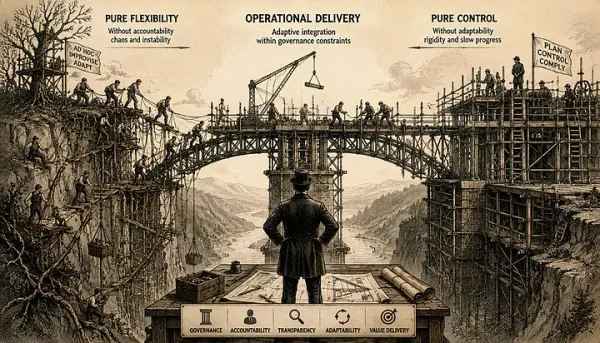

The misleading trade-off

Risk and speed are often presented as a trade-off. Move faster, accept more risk. Move slower, stay safe.

In practice, this framing breaks down quickly.

Faster systems do not just increase risk. They increase exposure to both failure and delay. The system becomes less forgiving in both directions. The real problem is not speed. It is applying slow-world risk models in fast systems.

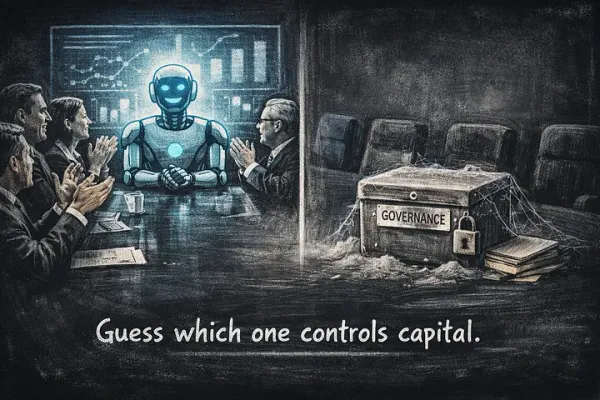

Where traditional control fails

Traditional risk management relies on assumptions that no longer hold:

- that risks can be identified upfront

- that plans remain stable long enough to execute

- that control can be centralized

In high-velocity environments, these assumptions degrade. Plans become outdated before they are completed. Unknown risks dominate known ones. Decision volume exceeds what centralized control can handle.

At this point, many teams draw the wrong conclusion. They see that predictive control does not work and treat that as permission to reduce discipline.

This is where systems start to fail. The failure of prediction is mistaken for permission to stop managing risk.

A different approach: Defensive Delivery

The alternative is not less risk management. It is different risk management.

What we use is a model we call Defensive Delivery.

It starts with a simple premise:

Problems are inevitable. The question is when they are detected and how costly they are when they surface .

From that premise, two principles follow.

Detection must move closer to action

Risk cannot be managed effectively if it is detected late. In high-velocity systems, the distance between decision and feedback must be as short as possible.

This requires:

- making assumptions explicit early

- validating continuously, not at the end

- treating deviations as signals, not noise

This is not about empowering teams in an abstract sense. It is about placing responsibility for detection where decisions are actually made. The closer detection is to action, the lower the cost of correction.

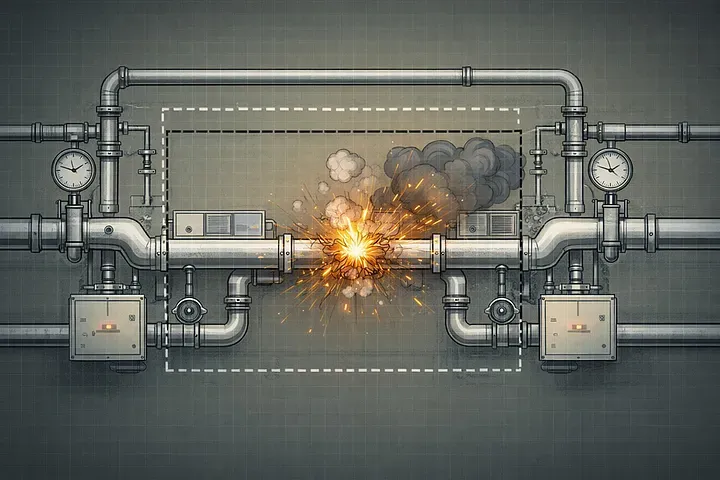

You cannot eliminate failure. You can control its cost.

The second principle is often misunderstood.

Failure cannot be fully prevented in complex systems. Attempting to do so usually leads to either paralysis or illusion. What can be controlled is the blast radius.

This means designing systems so that failures:

- remain local

- do not cascade across components

- can be observed and corrected early

Small, contained failures are manageable. Large, systemic failures are not.

Example: high velocity under real constraints

A recent example comes from building a multi-asset trading SuperApp, as featured in FinTech Magazine (August 2025).

The environment combined everything that makes risk management difficult:

- accelerated delivery timelines

- strict regulatory expectations

- direct exposure to end users

- multiple vendors and legacy systems

A front-facing trading platform is continuously probed. Any vulnerability is discovered quickly. Any malfunction affects trust immediately. Any failure across systems appears as a single failure to the user.

In this context, risk is not theoretical. It is immediate and visible.

Traditional approaches do not scale here. Late validation is too late. Centralized control is too slow. Complete upfront specification is unrealistic.

The only workable approach was to apply Defensive Delivery in practice.

Detection was pushed as close as possible to execution. Assumptions were surfaced early. Validation was continuous.

At the same time, the system was designed to limit the impact of failure. In a flow where a single user action triggers multiple coordinated backend operations, every component is effectively mission-critical.

That reality cannot be removed. But its consequences can be contained.

The result was not elimination of risk. It was control over how risk manifests.

What this changes for leadership

This shift is not primarily technical. It is managerial. Leadership does not lose control. It changes how control is exercised.

Instead of attempting to predict and approve every decision, leadership must:

- define acceptable risk boundaries

- make trade-offs explicit

- ensure decisions are grounded in what is known at the time

The goal is not perfect decisions. It is decisions that are appropriate for the situation and can hold under pressure.

What this is not

This approach is often misunderstood.

It is not:

- an excuse for chaos

- decentralization without accountability

- speed at any cost

It is governance adapted to reality.

Not control through prediction, but control through clarity, feedback, and containment.

Closing

Speed does not remove the need for risk management.

It removes the illusion that we can avoid it. The discipline remains the same. The mechanism must change.

Push detection downstream. Limit the blast radius. And make decisions you can stand behind.